What?

Some stemmatology

data originally

used in a challenge funded by the

EU Pascal network.

The task is to infer a directed acyclic graph over the documents.

Abstract

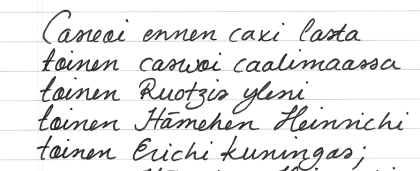

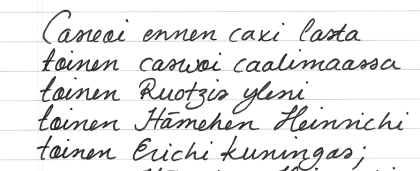

Stemmatology (a.k.a. stemmatics) studies relations among different

variants of a document that have been gradually built from an original

text by copying and modifying earlier versions. The aim of such study

is to reconstruct the family tree (causal graph) of the variants.

We provide a dataset to evaluate methods for computer-assisted

stemmatology. The ground truth is provided, as are evaluation

criteria to allow the ranking of the results of different methods.

We hope this will facilitate the development of novel approaches,

including but not restricted to hierarchical clustering, graphical

modeling, link analysis, phylogenetics, string-matching, etc.

Data

This data-set is a result of an experiment performed by the

organizers and 17 volunteers (see Credits below).

The original text is in old Finnish, and is known as Piispa Henrikin surmavirsi ('The

Death-Psalm of Bishop Henry').

Some of the copied variants are 'missing', i.e., not included in the

given data-set. The copies were made by hand (pencil and paper). Note

that the structure of the possible stemma is not necessarily limited

to a bifurcating tree: more than two immediate descendants (children)

and more than one immediate predecessor (parents) are possible.

(Note that although the documents are named 'A', 'Ab', 'Ac', etc, there is no information in the names

so for instance, 'Ab' is not necessarily any closer to 'Ac' than it is to 'H'.)

The data is available in several formats, feel free to choose the format that best suits your needs.

Note about the encoding: In the numeric and Nexus

(see here)

formatted files we use the convention that the different readings on

each row are sorted alphabetically and replaced by integers

(1,2,3,...) or symbols (A,B,C,...). Hence, the same value/symbol on

different rows usually stands for a different reading. Question marks

denote missing values.

Task

The task is to infer a directed acyclic graph over the documents.

This graph may include hidden (unobserved) documents,

as indeed some original documents are not included in the data.

Your solution method should, when input the provided data, return a graph in the following file format:

<number of nodes>

<node1>

<node2>

<.>

<nodeN>

<e1,1> <e1,2> <.> <e1,N>

<e2,1> <e2,2> <.> <e2,N>

<.> <.> <.> <.>

<.> <.> <.> <.>

<.> <.> <.> <.>

<eN,1> <eN,2> <.> <eN,N>

where <ei,j> can be either -1 (no arrow from node i to node j), 0 (node i and node j are the same), or 1

(an arrow from node i to node j). These are defined over all variables (including hidden variables),

in the order they were given in the node list. See, as an example, the file

estimated.txt.

Evaluation

Since the dataset was obtained through a controlled experiment, the ground truth is known, and any proposed

solution can be compared to this true graph.

Although there of course exists a risk of overlearning, we nevertheless chose to release the

true graph. We are confident that researchers can, at least in the long run,

judge methods on their simplicity in addition to their power to produce something close to the true

graph on this problem. When more datasets become available it will be more and more difficult to

overfit without getting caught.

(Note that the documents 'Da','I', and 'J' have been left out from the true graph. They are strong outliers

and you are free to leave them out of your graphs/analysis as well.)

There are naturally many ways to compare the similarity of any found graph to the true graph. We here

provide two simple criteria that can be used for now (feel free to suggest others if you can motivate why

they would be more appropriate!):

average sign distance for skeleton -

for computing the similarity between two unordered graphs (i.e. first drops the orientations

of all the edges in both the true and the estimated graphs). For each triplet of

documents (X,Y,Z) tests whether the distance (minimum number of links required to get from one to the other) X-Z is

greater than, equal to, or smaller than the distance

Y-Z in the correct graph and in the estimated graph. When they agree, a score of +1 is assigned. If the

distances are unequal in the true graph, half a point is awarded if in the estimated graph they are equal.

If the distances are equal in the true graph, half a point is awarded if in the estimated graph they are

unequal. Note that somewhat more than 50% of the maximum points is obtained by simply returning a graph with no

edges, so most methods should result in values between 50% and 100%.

average sign distance for orientation -

for comparing the similarity with respect to causal orientations. For each pair of

documents (X,Y) checks which one is closer to the closest root in the graph. Points are awarded as in the

above case. Again, typical methods should result in values between 50% and 100%.

We provide an evaluation script written in

Python for performing this evaluation [Note: the

script was broken but is now fixed, October 21, 2009].

The code should be run as

follows:

$ ./tripled.py correct.txt estimated.txt

Note that we welcome scripts in other languages (please send us an email if you have one) for

performing the evaluation, but the correctness of the code should be verified by comparing to the

provided script.

As a baseline result, we have applied the Neighbour-Joining algorithm

implemented in the PAUP*

software (version 4), with default settings. The resulting tree-shaped

stemma can be found in the

file estimated.txt.

The baseline score is:

$ ./tripled.py correct.txt estimated.txt

average sign distance for skeleton 67.887%

average sign distance for orientation 58.467%

The skeleton score (67.887%) is likely to be rather competitive,

but as the Neighbour-Joining algorithm doesn't even attempt to

optimize the orientation, the order of the edges is arbitrary. For this

reason, we anticipate that the orientation score (58.467%) can be

significantly improved, for instance, by causal analysis.

Authors:

-

Teemu Roos (teemu.roos at cs.helsinki.fi),

Helsinki Institute for Information Technology

(main contact person)

-

Tuomas Heikkilä, Department of History, University of Helsinki

- Petri

Myllymäki, Department of Computer Science, University of

Helsinki

- Patrik Hoyer,

Helsinki Institute for Information Technology

Volunteer copyists:

- Áron Bence

- Sampsa Hatakka

- Janica Hindle

- Lauri Hirvonen

- Sari Hirvonen-Skarbö

- Juha Hurme

- Olli Kasurinen

- Elina Kettunen

- Mirja Komulainen

- Katri Korolainen

- Suvi Kukkonen

- Hanna Lundell

- Johanna Mallat

- Rose-Marie Peake

- Samuli Simelius

- Jaakko Tahkokallio

- Juho Wilskman

Transcription:

- Tuula Roos

- Hanna Neuvonen

Advice and support from the following scholars is greatly appreciated:

The original challenge received funding from:

Updated on: November 11, 2008